OpenClaw Is Building a Messaging Test Suite — Here's Why It Matters

OpenClaw creator Peter Steinberger just announced he's building a full end-to-end testing infrastructure for every messaging channel OpenClaw supports. This isn't a minor feature — it's a direct response to a wave of messaging regressions that have frustrated users for weeks.

Here's what's happening, why it matters, and what it means for anyone running an OpenClaw agent in production.

What Peter Actually Said

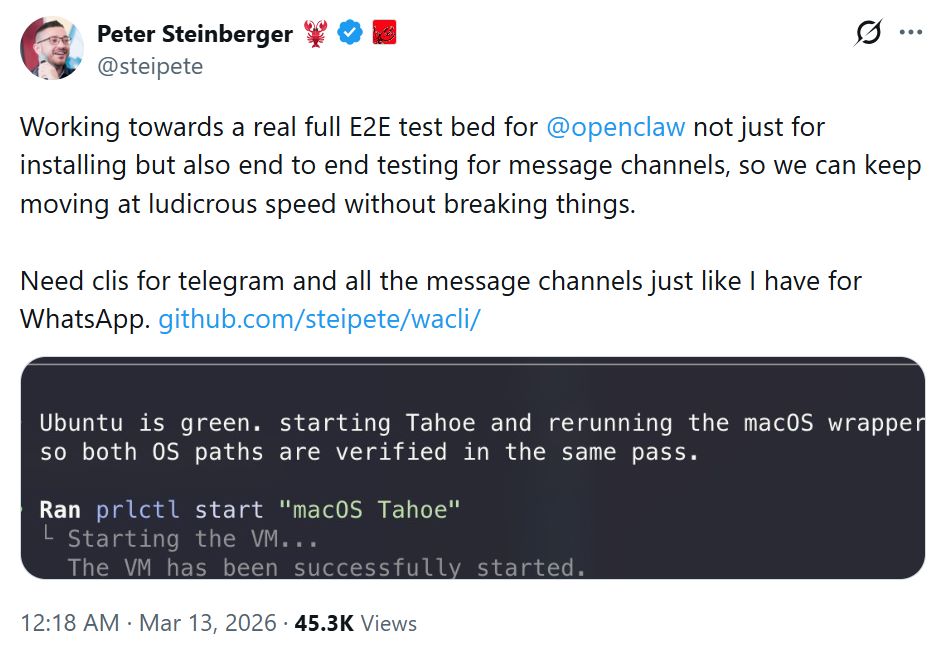

On March 12, Steinberger posted on X:

"Working towards a real full E2E test bed for @openclaw not just for installing but also end to end testing for message channels, so we can keep moving at ludicrous speed without breaking things. Need CLIs for telegram and all the message channels just like I have for WhatsApp."

Two key details stand out:

- He already has a WhatsApp CLI for testing. Telegram and other channels are next.

- He's building it in-house. When someone offered an external testing framework (Autonoma AI), Peter replied: "usually it's faster to build tools specifically tailored, esp since my clanker already built most of it."

In other words, OpenClaw's messaging layer is headed for automated, scriptable end-to-end testing on every channel. That kind of check can run on each release instead of relying on users to notice breakage after they upgrade.

Why This Is Happening Now

OpenClaw has been shipping at what Steinberger calls "ludicrous speed" — multiple releases per week, major features landing in days. But that velocity has come at a cost: messaging channels keep breaking after updates.

A look at recent GitHub issues tells the story:

🔴 Issue #36739: Telegram Multi-Account Regression (v2026.3.2)
After upgrading, only the default Telegram bot account processed messages. Secondary accounts connected successfully (blue checkmarks appeared for senders), but OpenClaw silently dropped every incoming message: no logs, no errors, no responses.

🔴 Issue #33854: Intermittent Telegram Delivery Failure (v2026.3.3)
Agent replies in Telegram group topics intermittently failed to reach the client, even though the agent had completed its turn and the response appeared in OpenClaw's Web UI. The message was lost somewhere between the gateway and Telegram.

🔴 Issue #29238: Telegram Group Messages Silently Dropped
The gateway received group messages (confirmed via direct Bot API polling) but never routed them to the bound agents. There were no error logs; users discovered the problem hours later, when they noticed their agents had gone silent.

🔴 Issue #6402: Wrong Bot Delivers Messages After Restart
With multiple Telegram bots configured, a gateway restart caused responses to be delivered through whichever bot connected first, not the bot associated with the originating session. Agent A's reply would appear in Agent B's chat.
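To make the #6402 failure mode concrete, here is a minimal Python sketch contrasting "first connected bot wins" with routing bound to the originating session. The `Session` type and both `pick_bot_*` functions are hypothetical illustrations of the behavior described in the issue, not OpenClaw's actual gateway code.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Session:
    """Hypothetical session record: which bot account received the original message."""
    session_id: str
    bot_account: str


def pick_bot_buggy(connected: List[str], session: Session) -> str:
    # Mirrors the reported behavior: ignore the session and reply through
    # whichever bot finished connecting first after the restart.
    return connected[0]


def pick_bot_fixed(connected: List[str], session: Session) -> str:
    # Reply through the bot bound to the originating session, and fail
    # loudly instead of silently falling back to a different bot.
    if session.bot_account not in connected:
        raise RuntimeError(
            f"bot {session.bot_account!r} is not connected for session {session.session_id!r}"
        )
    return session.bot_account


if __name__ == "__main__":
    # After a restart, bot_b happens to reconnect before bot_a.
    connected = ["bot_b", "bot_a"]
    session_a = Session(session_id="chat-a", bot_account="bot_a")
    print("buggy:", pick_bot_buggy(connected, session_a))
    print("fixed:", pick_bot_fixed(connected, session_a))
```

A regression test for this class of bug would simulate a restart where the connection order changes and assert that every reply still goes out through the session's own bot.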

These aren't edge cases. They're core messaging reliability failures — the kind where your agent does its job perfectly but the user never sees the result.

What the Community Is Already Doing

The community hasn't been waiting around. A Reddit user (csbaker80) open-sourced an E2E test suite of roughly 95 tests across 10 categories that validates an entire OpenClaw deployment in under 2 minutes. Categories include:

- 🔧 Core (7 tests): Gateway health, HTTP, version, CPU, memory

- ⚙️ Config (20 tests): Schema compliance, model format, provider validation

- ⏰ Cron (13 tests): Delivery fields, channels, schedule verification

- 🔌 Plugins (5 tests): Registration, loading, initialization

It's written in bash, with no dependencies beyond bash, curl, and python3, and it catches the notorious delivery.target vs delivery.to bug that has bitten many users.
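For a sense of what a config-level check looks like, here is a minimal sketch in Python rather than bash. The article doesn't say which of the two keys is correct, so the expected and confusable key names are passed in as parameters. The JSON shape (a `cron.jobs` list with a `delivery` block per job) and the `find_misnamed_delivery` helper are hypothetical stand-ins, not OpenClaw's real schema or the community suite's code.

```python
import json
from typing import Any, Dict, List

# Hypothetical config for illustration only; OpenClaw's real schema may differ.
SAMPLE_CONFIG = """
{
  "cron": {
    "jobs": [
      {"name": "morning-brief", "delivery": {"channel": "telegram", "to": "12345"}},
      {"name": "weekly-report", "delivery": {"channel": "telegram", "target": "12345"}}
    ]
  }
}
"""


def find_misnamed_delivery(config: Dict[str, Any], expected: str, confusable: str) -> List[str]:
    """Return names of cron jobs whose delivery block has `confusable` but not `expected`."""
    bad = []
    for job in config.get("cron", {}).get("jobs", []):
        delivery = job.get("delivery", {})
        if confusable in delivery and expected not in delivery:
            bad.append(job.get("name", "<unnamed>"))
    return bad


if __name__ == "__main__":
    cfg = json.loads(SAMPLE_CONFIG)
    # Assumption for this example only: "to" is the key the scheduler reads.
    problems = find_misnamed_delivery(cfg, expected="to", confusable="target")
    for name in problems:
        print(f"FAIL {name}: delivery uses 'target' instead of 'to'")
    print("PASS" if not problems else f"{len(problems)} problem(s) found")
```

Checks like this are cheap and catch misconfiguration before anything runs, but they say nothing about whether a live message actually makes it through.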

But this community tool tests the deployment — not the live message flow. That's the gap Peter's testbed aims to close: verifying that a message sent via Telegram actually reaches the agent and the response actually makes it back to the user.
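The difference is easiest to see in code. A deployment check asks whether the gateway is up; a message-flow check sends a uniquely tagged message and waits for a reply that echoes the tag. Below is a minimal Python sketch of that pattern against an in-memory fake channel. `FakeChannel` and `round_trip_ok` are hypothetical; Peter's testbed would presumably drive real channel CLIs, whose interfaces the post doesn't describe.

```python
import time
import uuid
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class FakeChannel:
    """Hypothetical in-memory stand-in for a message channel such as a Telegram test chat."""
    # The "agent": takes the user's text, returns a reply or None (a silent drop).
    handler: Optional[Callable[[str], Optional[str]]] = None
    # Replies that actually reached the user's side of the chat.
    inbox: List[str] = field(default_factory=list)

    def send_as_user(self, text: str) -> None:
        if self.handler is None:
            return  # nothing routed the message: no reply, no error
        reply = self.handler(text)
        if reply is not None:
            self.inbox.append(reply)


def round_trip_ok(channel: FakeChannel, timeout_s: float = 2.0) -> bool:
    """Send a tagged message and poll until a reply containing the tag arrives or time runs out."""
    nonce = uuid.uuid4().hex[:8]  # unique tag so a stale reply can't cause a false pass
    channel.send_as_user(f"ping {nonce}")
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        if any(nonce in msg for msg in channel.inbox):
            return True
        time.sleep(0.05)
    return False


if __name__ == "__main__":
    healthy = FakeChannel(handler=lambda text: "pong " + text.split()[-1])
    dropped = FakeChannel(handler=lambda text: None)  # like the silent drops in #29238
    print("healthy:", round_trip_ok(healthy))
    print("dropped:", round_trip_ok(dropped, timeout_s=0.2))
```

The timeout-bounded polling matters because real delivery is asynchronous. A test that only checks for the absence of errors would pass on every silent-drop bug listed above; a test that waits for the tagged reply fails on all of them.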

What This Means for OpenClaw Users

Short term: Messaging reliability should improve in coming releases. Once the testbed is running, regressions like #36739 are far more likely to be caught before they ship.

Medium term: The "messaging as reliable software" approach signals OpenClaw is maturing from a fast-moving open-source project into production-grade agent infrastructure. Every messaging channel becomes a first-class citizen with automated verification.

For teams running agents in production: This is exactly the kind of infrastructure investment that separates a weekend experiment from a system you can depend on. But building and maintaining your own OpenClaw deployment means you're still the one dealing with update regressions, gateway restarts, and channel configuration debugging until the testbed catches up.

Skip the Setup, Not the Ecosystem

The testbed Peter is building is great news — once it's battle-tested across every channel, self-hosted reliability will take a leap forward. But even with better testing upstream, self-hosting still means managing your own server, handling updates, configuring gateway and channels, and debugging when things go sideways.

That's the real time sink for most users — not the OpenClaw bugs themselves, but the operational overhead of running your own instance 24/7.

MyClaw.ai — the #1 OpenClaw host — eliminates that overhead entirely: one-click cloud deployment, 24/7 uptime, every OpenClaw version maintained and tested for compatibility, plus 10% off frontier models like Claude Opus 4.6 and GPT-5.4. It's the best way to run OpenClaw if you'd rather focus on what your agent does than how it's deployed.

To be clear: if an upstream OpenClaw bug breaks Telegram, it breaks Telegram everywhere — managed or not. MyClaw isn't a magic patch for OpenClaw's codebase. What it does remove is the hours of setup, maintenance, and "why did my gateway crash at 3 AM" debugging that most users would rather skip.

Bottom Line

Peter Steinberger publicly acknowledging the messaging reliability gap — and committing to solve it with proper testing infrastructure — is a sign of maturity for the OpenClaw project. The fact that he's building channel-specific CLIs for automated testing shows he understands the problem isn't just bugs — it's the lack of infrastructure to prevent them.

For the open-source community, this means better releases. For everyone else, the question isn't whether OpenClaw will get more reliable — it will. The question is whether you want to manage that journey yourself or use MyClaw.ai — the best way to run OpenClaw — and focus on what your agent actually does.

Skip the setup. Get OpenClaw running now.

MyClaw gives you a fully managed OpenClaw (Clawdbot) instance — always online, zero DevOps. Plans from $19/mo.